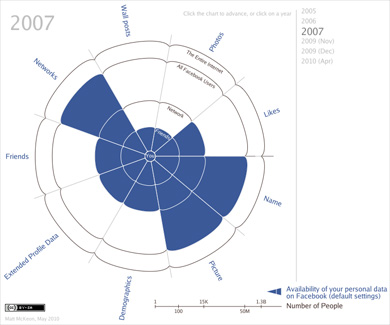

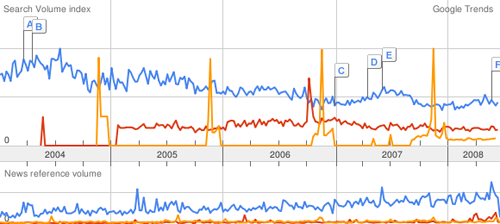

Excellent article from the Boston Globe Sunday Magazine on how parents of teenagers are handling their over-connected kids. Cell phones, text messaging, instant messaging, Facebook, MySpace, and to a lesser extent (for this age group) email mean that a lot of information and conversation is shared and exchanged. And as with all new technologies, it can all be tracked and recorded, and more easily spied upon. (More easily meaning that a parent can read a day’s worth of IM logs in a fairly quick sitting, something that couldn’t be done with a day’s worth of telephone conversations.) There are obvious and direct parallels to the U.S. government monitoring its own citizens, but I’ll return to that in a later post.

The article starts with a groan:

One mom does her best surveillance in the laundry room. Her teenage son has the habit of leaving his cellphone in the pocket of his jeans, so in between sorting colors and whites, she’ll grab his phone and furtively scroll through his text messages from the past week to see what he’s said, whom he’s connected with, and where he’s been.

While it’s difficult to say what this parent was specifically hoping to find (or what they’d do with the information), it worsens as it sinks to a level of cattiness:

Sometimes, she’ll use her own phone to call another mom she’s friendly with and share her findings in hushed tones.

Further in, some insight from Sherry Turkle:

MIT professor Sherry Turkle is a leading thinker on the relationship between human beings and technology. She’s also the mother of a teenage girl. So she knows what she’s talking about when she says, “Parents were not built to know the kinds of things that technology makes possible.”

(Emphasis mine.) This doesn’t just go for parents; it’s a much bigger issue of spying on the day-to-day habits and ramblings of someone else. It’s the same reason you should never read someone else’s email, whether it belongs to a significant other, a spouse, or a friend. No matter how well you know the sender and recipient, you’re still not them. You don’t think like them. You don’t see the world the way they do. You simply don’t have proper context, nor the understanding of their relationship with one another. You probably don’t even have the entire thread of this one email conversation. I’ve heard from friends who read an email belonging to their significant other, only to wind up in tears and expecting the worst.

This scenario never ends well: you can either keep it in and remain upset, or you can confront the person, in which case one of two things will happen. One, your worst fear turns out to be true (“he’s cheating!”), but you’re partially indicted in the mess because you’ve spied (“how could you read my email?”), and you’ve lost the moral high ground you might otherwise have had (“I can’t believe you didn’t trust me”). Or two, you’ve blown something out of proportion and destroyed the trust of that person: someone you cared about enough to be concerned to the point of reading their private email.

Returning to the article, one of the scenarios I found notable:

…there’s a natural desire, and a need, for teenagers to have their own parent-free zone as they get older.

As a graduating senior at Cambridge Rindge and Latin, Sam McFarland is grateful his parents trusted him to make the right decisions once he had established himself as worthy of the trust. A few of his friends had parents who were exceedingly vigilant. The result? “You don’t hang out at those kids’ houses as much,” Sam says.

So there’s something fascinating about this—that not only is it detrimental to your kid’s development to be overly involved, but that it presents a socialization problem for them because they become ostracized (even if mildly) because of your behavior.

And when parents confront?

When one of his friends was 14, the kid’s parents reprimanded him for something he had talked about online. Immediately, he knew they had been spying on him, and it didn’t take long for him to determine they’d been doing it for some time. “He was pretty angry,” Sam says, “He felt kind of invaded.” At first, his friend behaved, conscious that his parents were watching his every move. “But then it reached a tipping point,” Sam says. “He became so fed up about it that, not only didn’t he care if they were watching, but he began acting out, hoping they were watching or listening so he could upset them.”

I’m certain that this would have been my response if my parents had done something like this. (As if teenagers need something to fuel their adversarial attitude toward their parents.) But now you have a situation where a reasonably good kid has made an active decision to behave worse in response to his parents’ mistrust and attempt to rein him in.

The article doesn’t mention what he had done, but how bad could it have been? And that is the crux of the situation: What do these parents really expect to find, and how can that possibly be outweighed by breaking that bond of trust?

It’s also easy to spy, so one (technology-savvy) parent profiled goes with what he calls his “fear of God” speech:

Greg warned them, “I can know everything you’re doing online. But I’m not going to invade your privacy unless you give me a reason to.”

By relying on the threat of intervention rather than intervention itself, Greg has been able to avoid the drawbacks that several friends of mine told me they experienced after monitoring their teenagers’ IM and text conversations. These are all great, involved parents who undertook limited monitoring for the right reasons. But they found that, in their hunt for reassurance that their teenager was not engaging in dangerously bad behavior, they were instead worn down by the little disappointments – the occasional use of profanities or mean-spirited name-calling – as well as the mind-numbing banality of so much teen talk.

And that’s exactly it—tying together the points of 1) you’re not in their head and 2) what did you expect to find? As you act out in different ways (particularly as a teenager), you’re trying to figure out how things fit. Nobody’s perfect, and they need some room to be their own age, particularly with their friends. Which made me particularly interested in this quote:

Leysia Palen, the University of Colorado professor, says the work of social theorist Erving Goffman is instructive. Goffman talked about how we all have “front-stage” and “backstage” personas. For example, ballerinas might seem prim and perfect while performing, only to let loose by smoking and swearing as soon as they are behind the curtain. “Everyone needs to be able to retreat to the backstage,” Palen says. “These kids need to learn. Maybe they need to use bad language to realize that they don’t want to use bad language.”

Unfortunately the article also goes astray with its glorification of the multitasking abilities of today’s teenagers:

On an average weeknight, Tim has Facebook and IM sharing screen space on the Mac outside his bedroom as he keeps connected with dozens of friends simultaneously. His Samsung Slider cellphone rests nearby, ready to receive the next text message…Every once in a while, he’ll strum his guitar or look up at the TV to catch some Ninja Warrior on the G4 network. Playing softly in the background is his personal soundtrack that shuffles between the Beatles and a Swedish techno band called Basshunter. Amid all this, he is doing his homework.

Yes, in truly amazing fashion, the human race has somehow evolved in the last ten years to be capable of effectively multitasking between this many different things at once. I don’t understand why people (much less parents) buy this. We have a finite attention span, and technology suggests ways to carve it up into ever-smaller slices. I might balance email, phone calls, writing, and watching a Red Sox game in the background, but there’s no way I’m gonna claim that I’m somehow performing all those things at 100%, or even that as I focus in on one of them, I’m truly 100% at that task. Those will be my teenagers in the sensory deprivation tank while they work on Calculus and U.S. History.

And to close, a more accurate portrayal of multitasking:

It’s not uncommon to see two teenage pals riding in the back of a car, each one texting a friend somewhere else rather than talking to the friend sitting next to them. It’s a throwback to the toddler days, when kids engage in parallel play before they’re capable of sustained interaction.